Generative Adversarial Networks

Introduction to Style Transfer, VAE, GAN, Cartoonization, Super-resolution, Inpaint Anything, 3D Avatar, NeRF.

Style Transfer

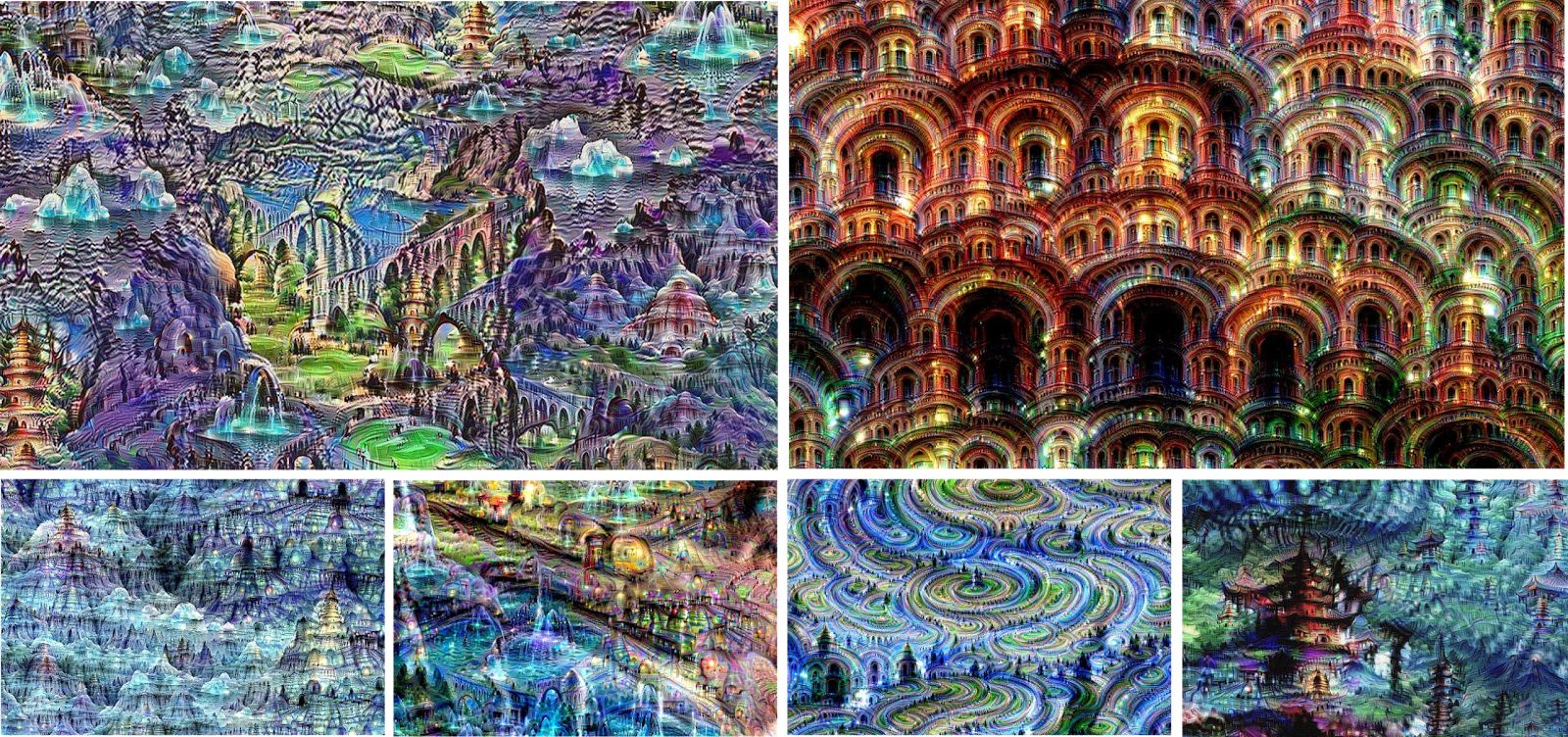

DeepDream

Blog: Inceptionism: Going Deeper into Neural Networks

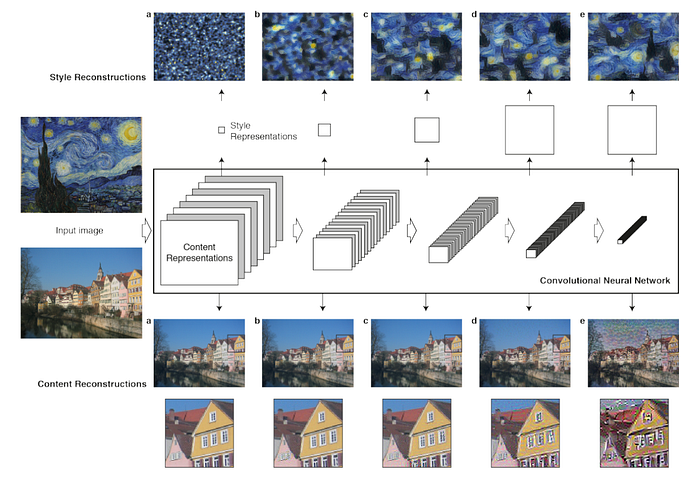

Nerual Style Transfer

Paper: A Neural Algorithm of Artistic Style

Code: ProGamerGov/neural-style-pt

Tutorial: Neural Transfer using PyTorch

Fast Style Transfer

Paper:A Neural Algorithm of Artistic Style & Perceptual Losses for Real-Time Style Transfer and Super-Resolution

Code: lengstrom/fast-style-transfer

|

|

|

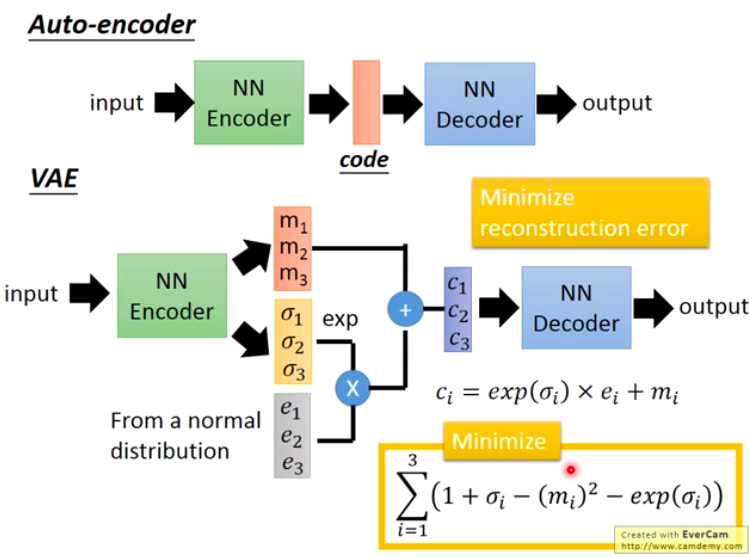

Variational AutoEncoder (VAE)

VAE

Blog: VAE(Variational AutoEncoder) 實作

Paper: Auto-Encoding Variational Bayes

Code: rkuo2000/fashionmnist-vae

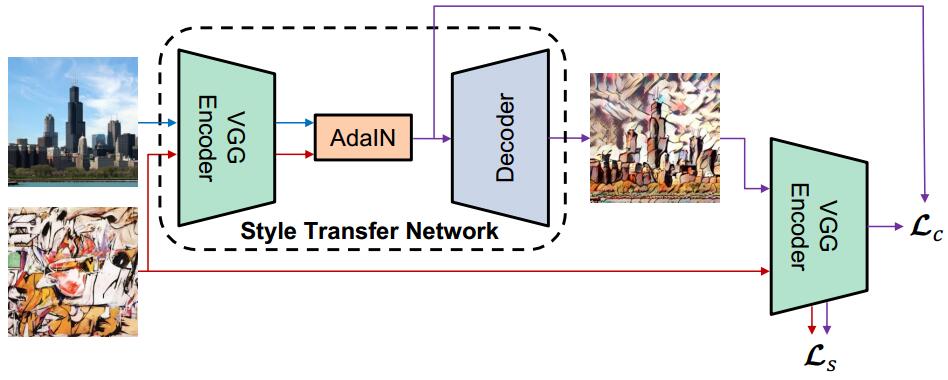

Arbitrary Style Transfer

Paper: Arbitrary Style Transfer in Real-time with Adaptive Instance Normalization

Code: elleryqueenhomels/arbitrary_style_transfer

|

|

|

|

|

|

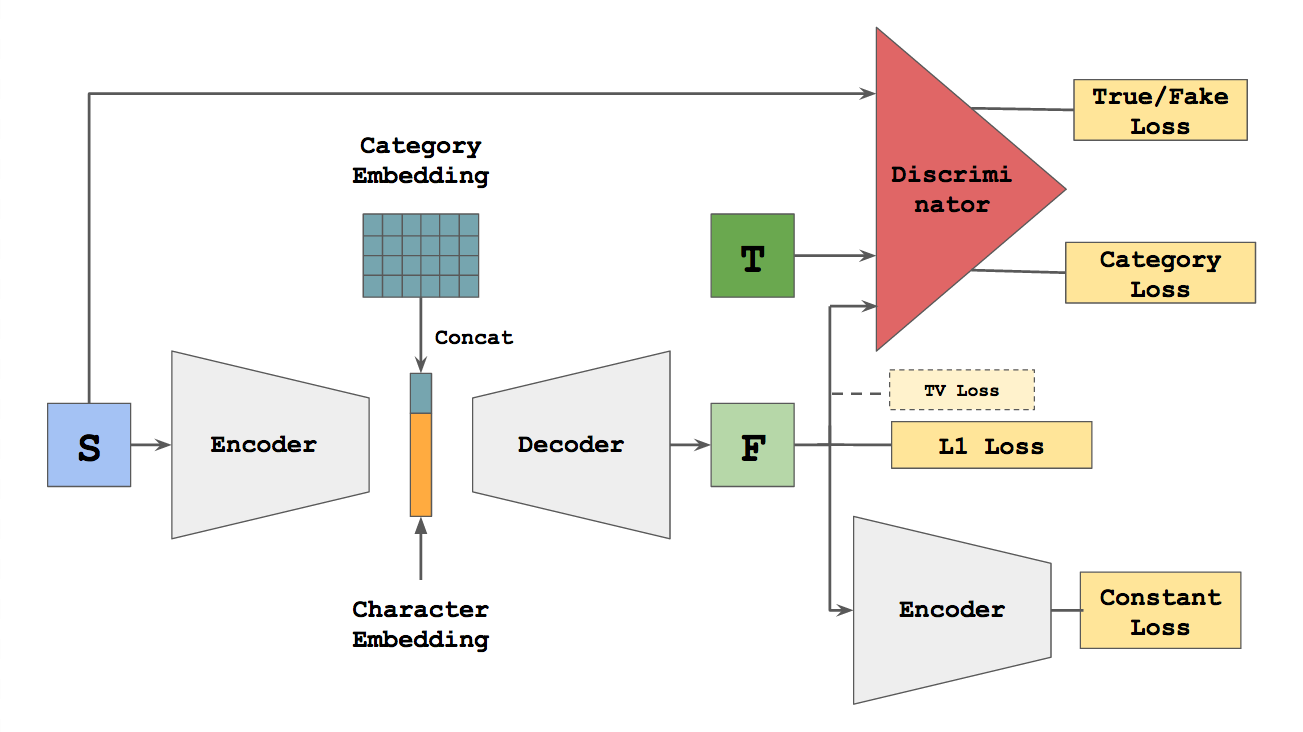

zi2zi

Blog: zi2zi: Master Chinese Calligraphy with Conditional Adversarial Networks

Paper: Generating Handwritten Chinese Characters using CycleGAN

Code: kaonashi-tyc/zi2zi

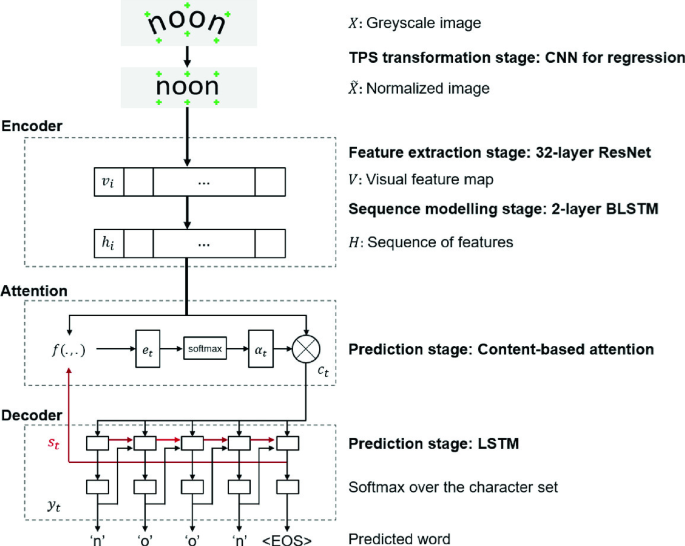

AttentionHTR

Paper: AttentionHTR: Handwritten Text Recognition Based on Attention Encoder-Decoder Networks

Github: https://github.com/dmitrijsk/AttentionHTR

Kaggle: https://www.kaggle.com/code/rkuo2000/attentionhtr

GAN - Generative Adversarial Networks (生成對抗網路)

Paper: https://arxiv.org/abs/1406.2661

Blog: A Beginner’s Guide to Generative Adversarial Networks (GANs)

G是生成的神經網路,它接收一個隨機的噪訊z,通過這個噪訊生成圖片,為G(z)

D是辨别的神經網路,辨别一張圖片夠不夠真實。它的輸入參數是x,x代表一張圖片,輸出D(x)代表x為真實圖片的機率

class GAN():

def __init__(self):

self.img_rows = 28

self.img_cols = 28

self.channels = 1

self.img_shape = (self.img_rows, self.img_cols, self.channels)

optimizer = Adam(0.0002, 0.5)

# Build and compile the discriminator

self.discriminator = self.build_discriminator()

self.discriminator.compile(loss='binary_crossentropy',

optimizer=optimizer,

metrics=['accuracy'])

# Build and compile the generator

self.generator = self.build_generator()

self.generator.compile(loss='binary_crossentropy', optimizer=optimizer)

# The generator takes noise as input and generated imgs

z = Input(shape=(100,))

img = self.generator(z)

# For the combined model we will only train the generator

self.discriminator.trainable = False

# The valid takes generated images as input and determines validity

valid = self.discriminator(img)

# The combined model (stacked generator and discriminator) takes

# noise as input => generates images => determines validity

self.combined = Model(z, valid)

self.combined.compile(loss='binary_crossentropy', optimizer=optimizer)

def build_generator(self):

noise_shape = (100,)

model = Sequential()

model.add(Dense(256, input_shape=noise_shape))

model.add(LeakyReLU(alpha=0.2))

model.add(BatchNormalization(momentum=0.8))

model.add(Dense(512))

model.add(LeakyReLU(alpha=0.2))

model.add(BatchNormalization(momentum=0.8))

model.add(Dense(1024))

model.add(LeakyReLU(alpha=0.2))

model.add(BatchNormalization(momentum=0.8))

model.add(Dense(np.prod(self.img_shape), activation='tanh'))

model.add(Reshape(self.img_shape))

model.summary()

noise = Input(shape=noise_shape)

img = model(noise)

return Model(noise, img)

def build_discriminator(self):

img_shape = (self.img_rows, self.img_cols, self.channels)

model = Sequential()

model.add(Flatten(input_shape=img_shape))

model.add(Dense(512))

model.add(LeakyReLU(alpha=0.2))

model.add(Dense(256))

model.add(LeakyReLU(alpha=0.2))

model.add(Dense(1, activation='sigmoid'))

model.summary()

img = Input(shape=img_shape)

validity = model(img)

return Model(img, validity)

DCGAN - Deep Convolutional Generative Adversarial Network

Paper: https://arxiv.org/abs/1511.06434

Code: https://github.com/carpedm20/DCGAN-tensorflow

Tutorial: DCGAN Tutorial

MrCGAN

Paper: Compatibility Family Learning for Item Recommendation and Generation

Code: https://github.com/appier/compatibility-family-learning

pix2pix

Paper: Image-to-Image Translation with Conditional Adversarial Networks

Code: https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix

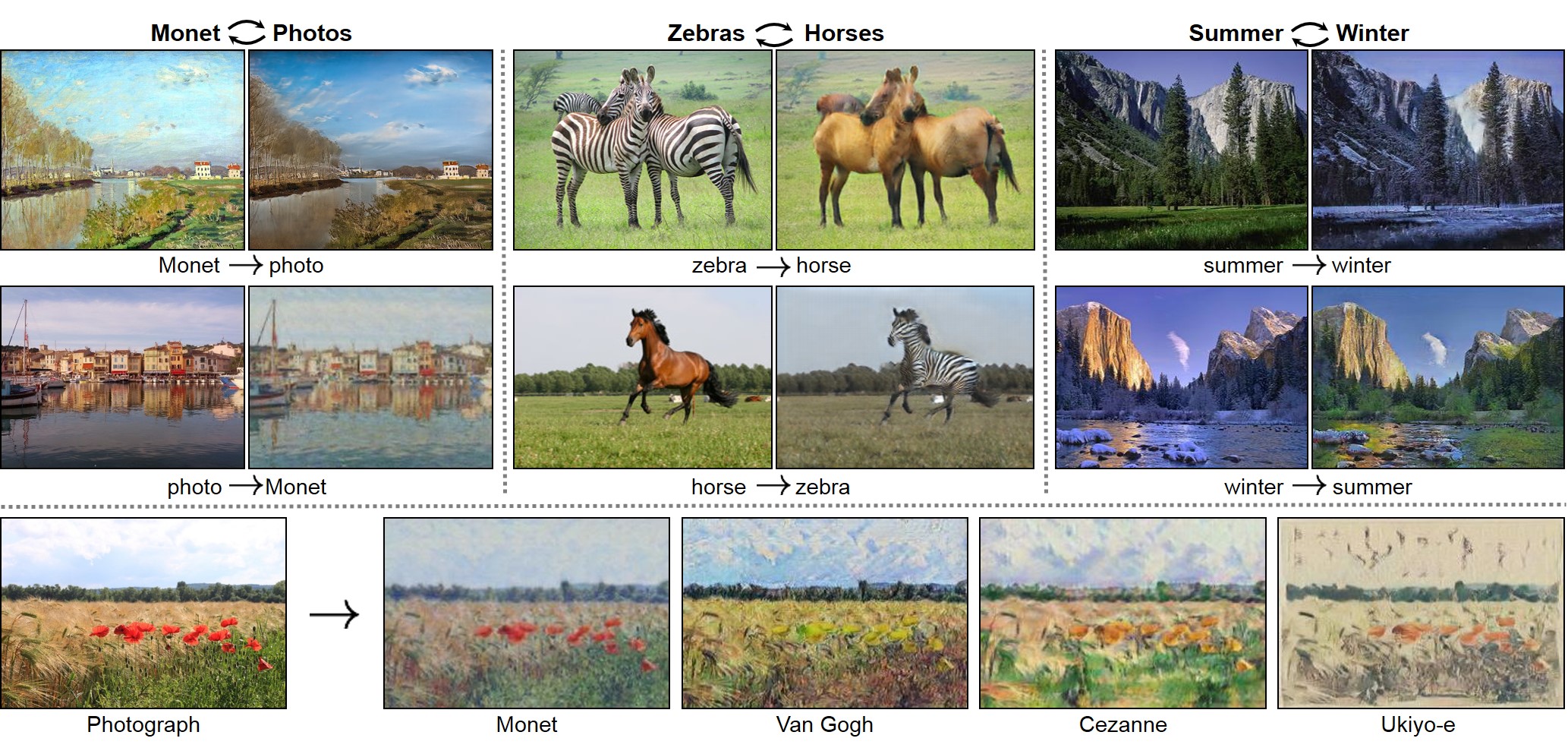

CycleGAN

Paper: Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks

Code: https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix

Tutorial: https://www.tensorflow.org/tutorials/generative/cyclegan

pix2pixHD

Paper: High-Resolution Image Synthesis and Semantic Manipulation with Conditional GANs

Code: https://github.com/NVIDIA/pix2pixHD

Recycle-GAN

Paper: Recycle-GAN: Unsupervised Video Retargeting

Code: https://github.com/aayushbansal/Recycle-GAN

Glow

Blog: https://openai.com/blog/glow/

Paper: Glow: Generative Flow with Invertible 1x1 Convolutions

Code: https://github.com/openai/glow

GANimation

Paper: GANimation: Anatomically-aware Facial Animation from a Single Image

Code: https://github.com/albertpumarola/GANimation

StyleGAN

Paper: A Style-Based Generator Architecture for Generative Adversarial Networks

Code: https://github.com/NVlabs/stylegan

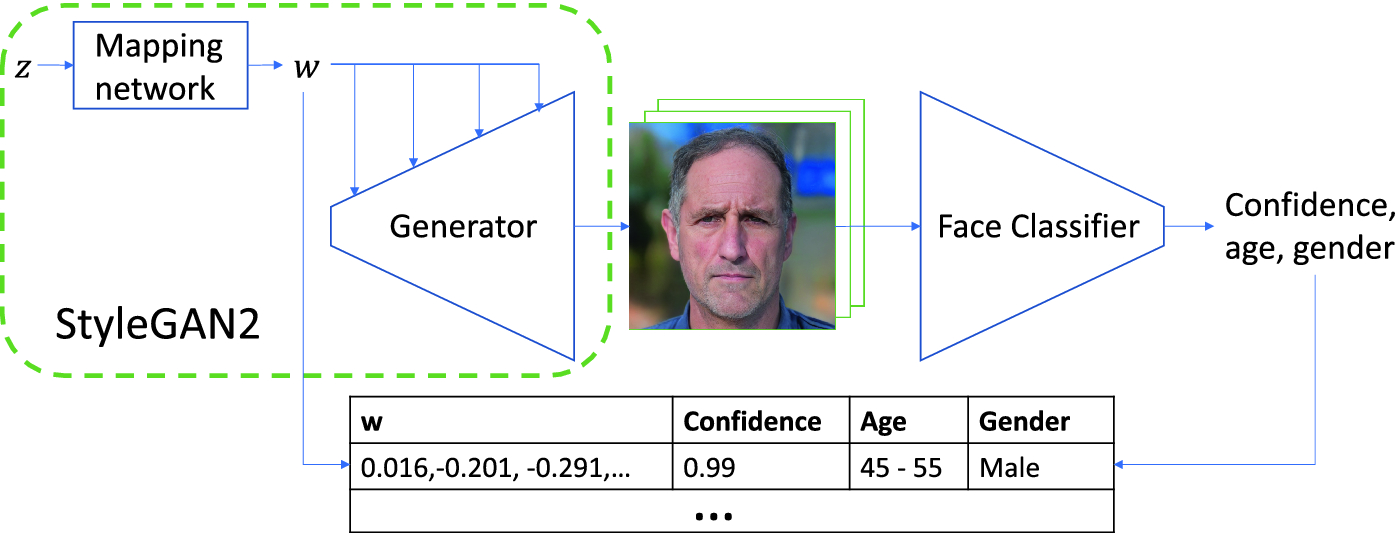

StyleGAN 2

Blog: Understanding the StyleGAN and StyleGAN2 Architecture

Paper: A Style-Based Generator Architecture for Generative Adversarial Networks

Code: https://github.com/NVlabs/stylegan2-ada-pytorch

StyleGAN2-ADA

Paper: Training Generative Adversarial Networks with Limited Data

Code: https://github.com/NVlabs/stylegan2-ada-pytorch

StyleGAN2 Distillation

Paper: StyleGAN2 Distillation for Feed-forward Image Manipulation

Code: https://github.com/EvgenyKashin/stylegan2-distillation

|

|

SideGAN

Paper: SideGAN: 3D-Aware Generative Model for Improved Side-View Image Synthesis

Toonify

Blog: StyleGAN network blending

Paper: Resolution Dependent GAN Interpolation for Controllable Image Synthesis Between Domains

Code: https://github.com/justinpinkney/toonify

pix2style2pix

Code: eladrich/pixel2style2pixel

![]()

![]()

Face Datasets

Celeb-A HQ Dataset

Flickr-Faces-HQ Dataset (FFHQ)

MetFaces dataset

Animal Faces-HQ dataset (AFHQ)

Animal Faces-HQ (AFHQ), consisting of 15,000 high-quality images at 512×512 resolution

The dataset includes three domains of cat, dog, and wildlife, each providing about 5000 images.

Ukiyo-e Faces

Cartoon Faces

Sefa

Paper: Closed-Form Factorization of Latent Semantics in GANs

Code: https://github.com/genforce/sefa

Kaggle: https://www.kaggle.com/code/rkuo2000/genforce-sefa

| Pose | Mouth | Eye |

|

|

|

Catoonization

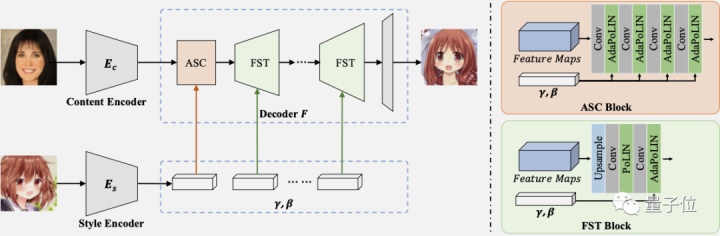

AniGAN

Blog: 博士後小姐姐把「二次元老婆生成器」升級了:這一次可以指定畫風

Paper: AniGAN: Style-Guided Generative Adversarial Networks for Unsupervised Anime Face Generation

CartoonGAN

Code: https://github.com/mnicnc404/CartoonGan-tensorflow

Kaggle: https://www.kaggle.com/code/rkuo2000/cartoongan/notebook

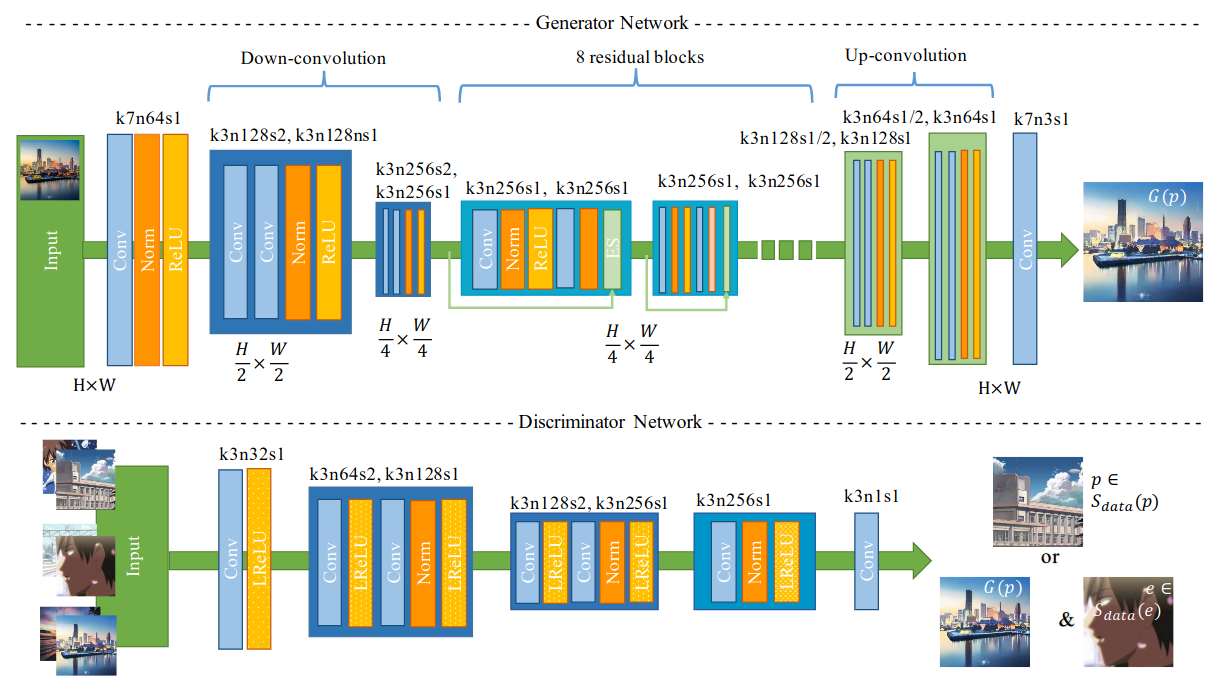

Cartoon-GAN

Paper: Generative Adversarial Networks for photo to Hayao Miyazaki style cartoons

Code: FilipAndersson245/cartoon-gan

Kaggle: https://www.kaggle.com/code/rkuo2000/cartoon-gan

White-box Cartoonization

Paper: White-Box Cartoonization Using An Extended GAN Framework

Code: SystemErrorWang/White-box-Cartoonization

Code: White-box facial image cartoonizaiton

Super-Resolutioin

Survey/Review

Paper: From Beginner to Master: A Survey for Deep Learning-based Single-Image Super-Resolution

Paper: A Review of Deep Learning Based Image Super-resolution Techniques

Paper: NTIRE 2023 Challenge on Light Field Image Super-Resolution: Dataset, Methods and Results

SRGAN

Paper: Photo-Realistic Single Image Super-Resolution Using a Generative Adversarial Network

Code: tensorlayer/srgan

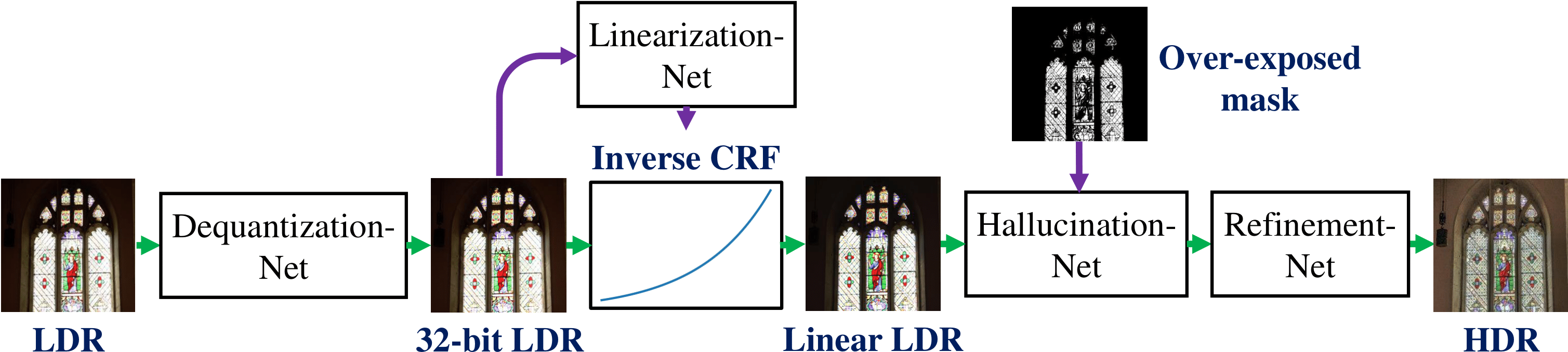

SingleHDR

Paper: Single-Image HDR Reconstruction by Learning to Reverse the Camera Pipeline

Code: https://github.com/alex04072000/SingleHDR

Image Inpainting

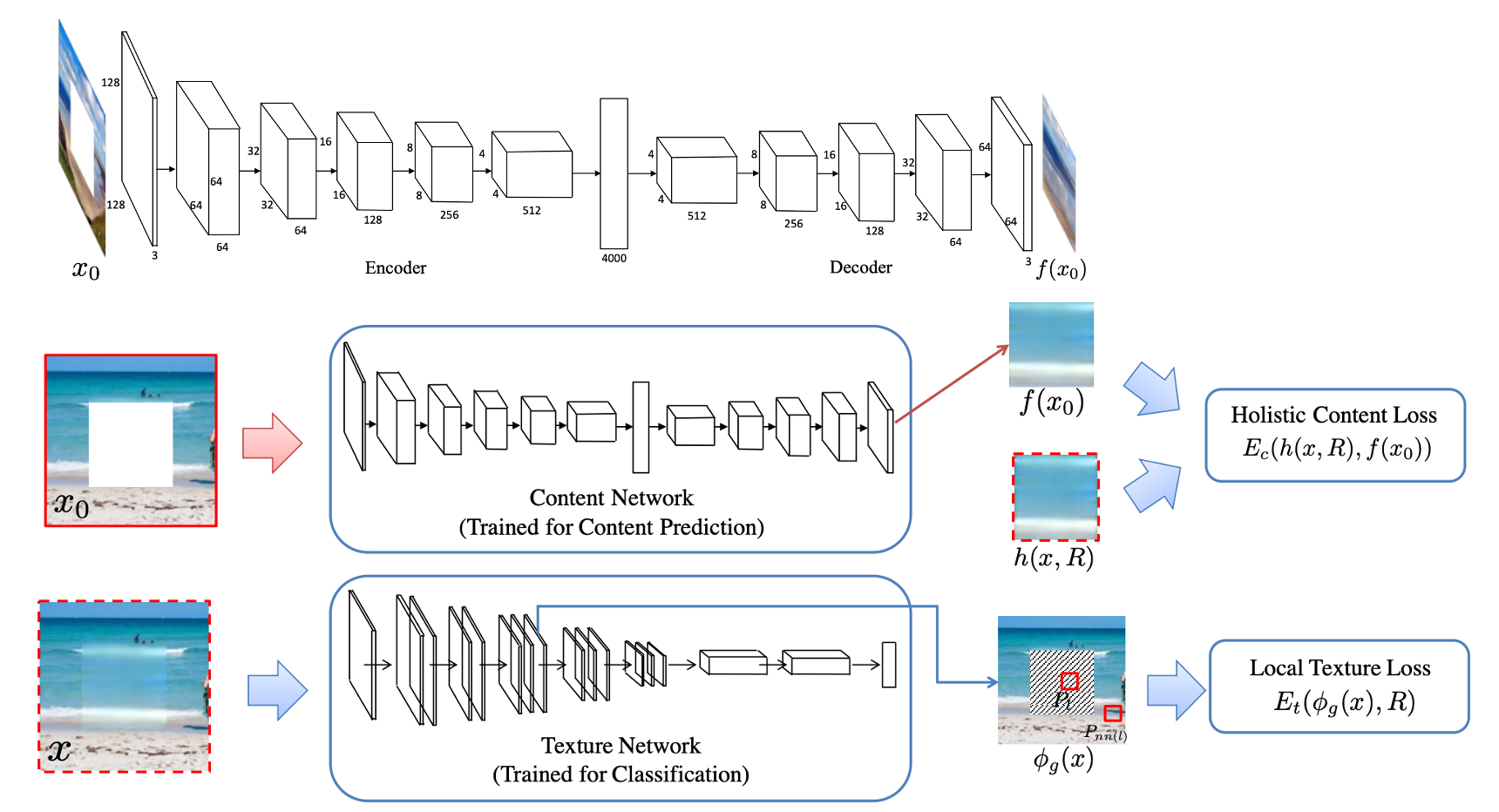

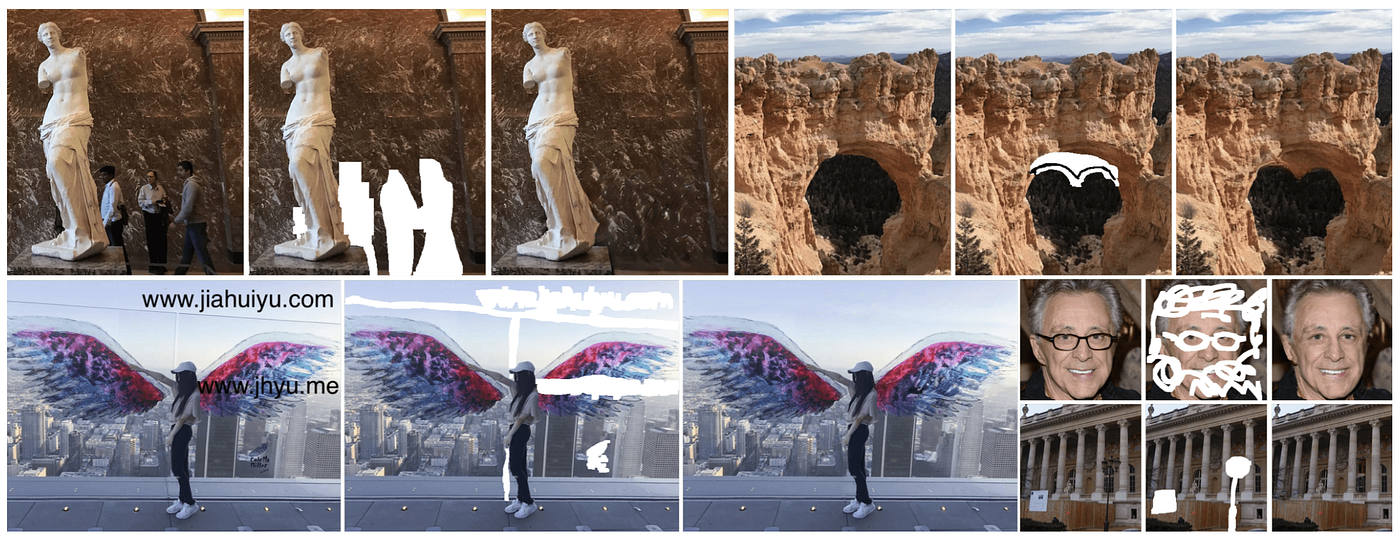

High-Resolution Image Inpainting

Blog: Review: High-Resolution Image Inpainting using Multi-Scale Neural Patch Synthesis

Paper: High-Resolution Image Inpainting using Multi-Scale Neural Patch Synthesis

Code: https://github.com/leehomyc/Faster-High-Res-Neural-Inpainting

Image Inpainting for Irregular Holes

Paper: Image Inpainting for Irregular Holes Using Partial Convolutions

Code: https://github.com/NVIDIA/partialconv

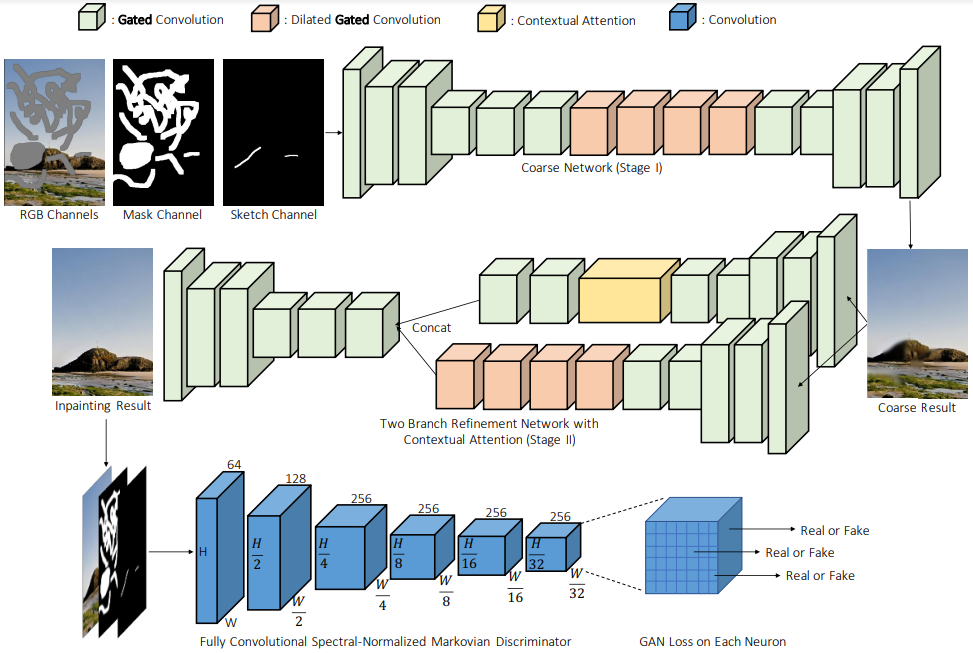

DeepFill V2

Paper: https://arxiv.org/abs/1806.03589

Code: https://github.com/JiahuiYu/generative_inpainting

GauGAN

Paper: Semantic Image Synthesis with Spatially-Adaptive Normalization

Code: NVlabs/SPADE

|

|

LaMa

Paper: Resolution-robust Large Mask Inpainting with Fourier Convolutions

Code: https://github.com/advimman/lama

Inpaint Anything

Paper: Inpaint Anything: Segment Anything Meets Image Inpainting

Code: https://github.com/geekyutao/inpaint-anything

Kaggle: https://www.kaggle.com/code/rkuo2000/inpaint-anything

Kaggle: https://www.kaggle.com/code/rkuo2000/inpaint-anything

Remove Anything

Kaggle: https://www.kaggle.com/code/rkuo2000/remove-anything

Replace Anything

Kaggle: https://www.kaggle.com/code/rkuo2000/replace-anything

T-former

Paper: T-former: An Efficient Transformer for Image Inpainting

Paper: https://github.com/dengyecode/T-former_image_inpainting

NeRF Inpainting

Paper: NeRF-In: Free-Form NeRF Inpainting with RGB-D Priors

Code: https://github.com/hitachinsk/NeRF-Inpainting

Video Inpaiting

Deep Flow-Guided Video Inpainting

Paper: Deep Flow-Guided Video Inpainting

Code: https://github.com/nbei/Deep-Flow-Guided-Video-Inpainting

Flow-edge Guided Video Completion

Paper: https://arxiv.org/abs/2009.01835

Code: https://github.com/vt-vl-lab/FGVC

FGT

Paper: Flow-Guided Transformer for Video Inpainting

Code: https://github.com/hitachinsk/FGT

E2FGVI

Paper: Towards An End-to-End Framework for Flow-Guided Video Inpainting

Code: https://github.com/MCG-NKU/E2FGVI

FGT++

Paper: Exploiting Optical Flow Guidance for Transformer-Based Video Inpainting

One-Shot Video Inpainting

Paper: One-Shot Video Inpainting

Infusion

Paper: Infusion: Internal Diffusion for Video Inpainting

Pose GAN

Pose-guided Person Image Generation

Paper: Pose Guided Person Image Generation

Code: charliememory/Pose-Guided-Person-Image-Generation

PoseGAN

Paper: Deformable GANs for Pose-based Human Image Generation

Code: AliaksandrSiarohin/pose-gan

Everybody Dance Now

Blog: https://carolineec.github.io/everybody_dance_now/

Paper: Everybody Dance Now

Code: carolineec/EverybodyDanceNow

PCDM

Paper: Advancing Pose-Guided Image Synthesis with Progressive Conditional Diffusion Models

Code: https://github.com/muzishen/PCDMs

Virtual Try On

VITON

Paper: VITON: An Image-based Virtual Try-on Network

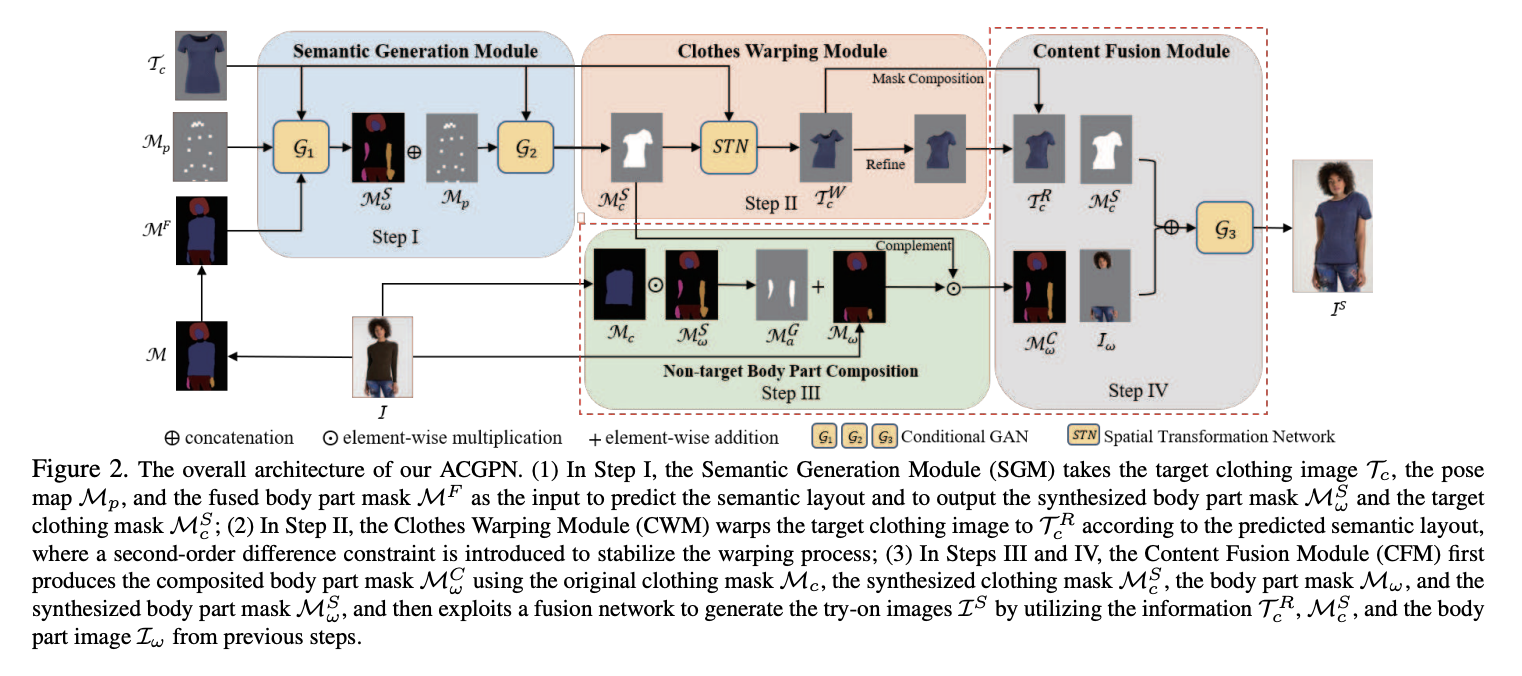

ACGPN

Paper: Towards Photo-Realistic Virtual Try-On by Adaptively Generating↔Preserving Image Content

Code: switchablenorms/DeepFashion_Try_On

CP-VTON+

Paper: CP-VTON+: Clothing Shape and Texture Preserving Image-Based Virtual Try-On

Code: minar09/cp-vton-plus

O-VITON

Paper: Image Based Virtual Try-on Network from Unpaired Data

Code: trinanjan12/Image-Based-Virtual-Try-on-Network-from-Unpaired-Data

PF-AFN

Paper: Parser-Free Virtual Try-on via Distilling Appearance Flows

Code: geyuying/PF-AFN

pix2surf

Paper: Learning to Transfer Texture from Clothing Images to 3D Humans

Code: polobymulberry/pix2surf

TailorNet

Paper: TailorNet: Predicting Clothing in 3D as a Function of Human Pose, Shape and Garment Style

Code: chaitanya100100/TailorNet

NeRF

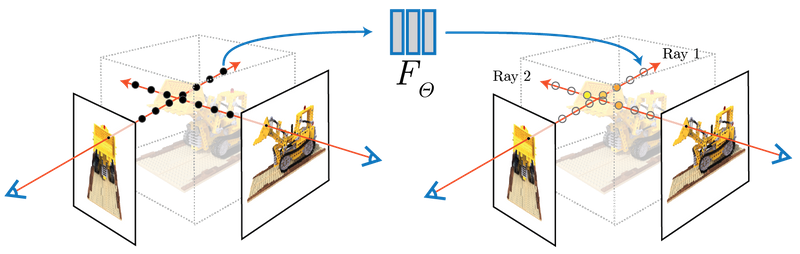

NeRF:Representing Scenes as Neural Radiance Fields for View Synthesis

Paper: arxiv.org/abs/2003.08934

Code: bmild/nerf

Colab: tiny_nerf

Kaggle: rkuo2000/tiny-nerf

The algorithm represents a scene using a fully-connected (non-convolutional) deep network, whose input is a single continuous 5D coordinate (spatial location (x, y, z) and viewing direction (θ, φ)) and whose output is the volume density and view-dependent emitted radiance at that spatial location.

We synthesize views by querying 5D coordinates along camera rays and use classic volume rendering techniques to project the output colors and densities into an image. Because volume rendering is naturally differentiable, the only input required to optimize our representation is a set of images with known camera poses. We describe how to effectively optimize neural radiance fields to render photorealistic novel views of scenes with complicated geometry and appearance, and demonstrate results that outperform prior work on neural rendering and view synthesis.

We synthesize views by querying 5D coordinates along camera rays and use classic volume rendering techniques to project the output colors and densities into an image. Because volume rendering is naturally differentiable, the only input required to optimize our representation is a set of images with known camera poses. We describe how to effectively optimize neural radiance fields to render photorealistic novel views of scenes with complicated geometry and appearance, and demonstrate results that outperform prior work on neural rendering and view synthesis.

FastNeRF

Paper: FastNeRF: High-Fidelity Neural Rendering at 200FPS

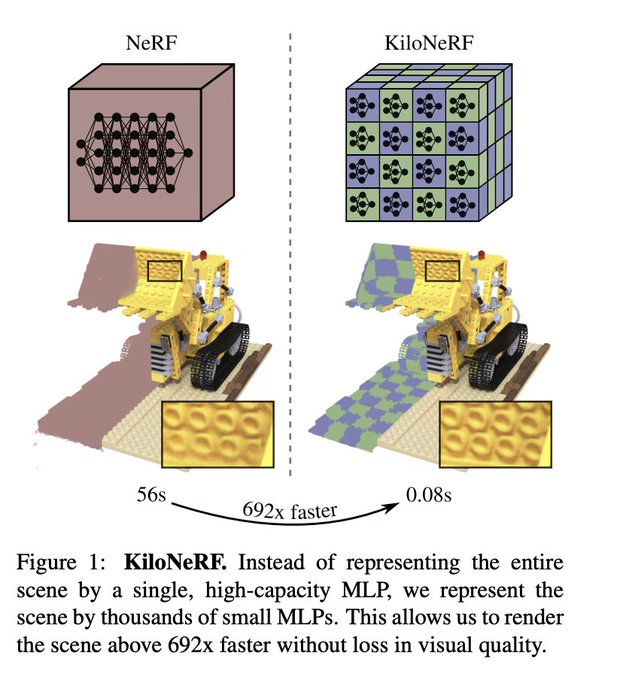

KiloNeRF

Paper: KiloNeRF: Speeding up Neural Radiance Fields with Thousands of Tiny MLPs

Code: creiser/kilonerf

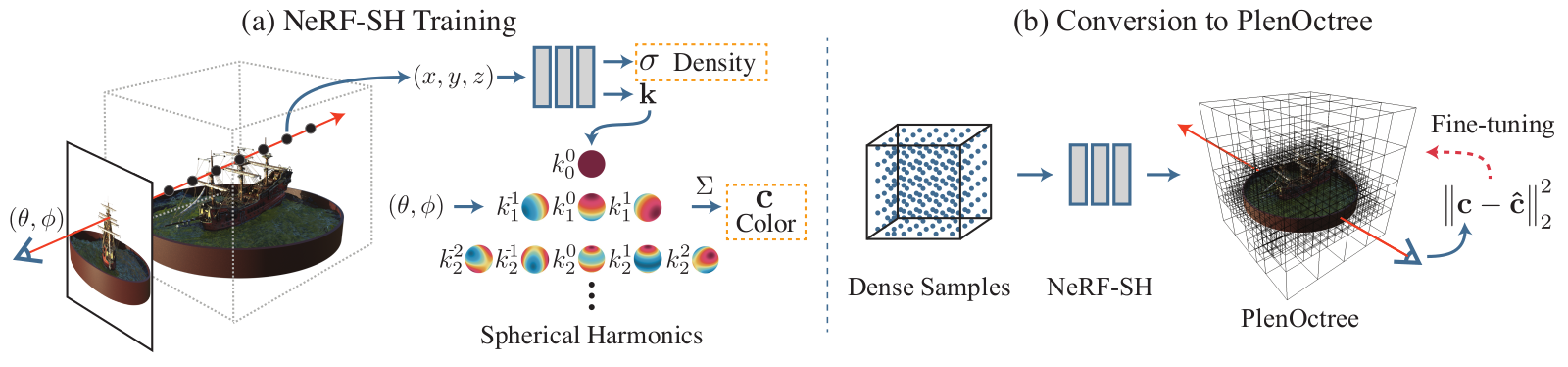

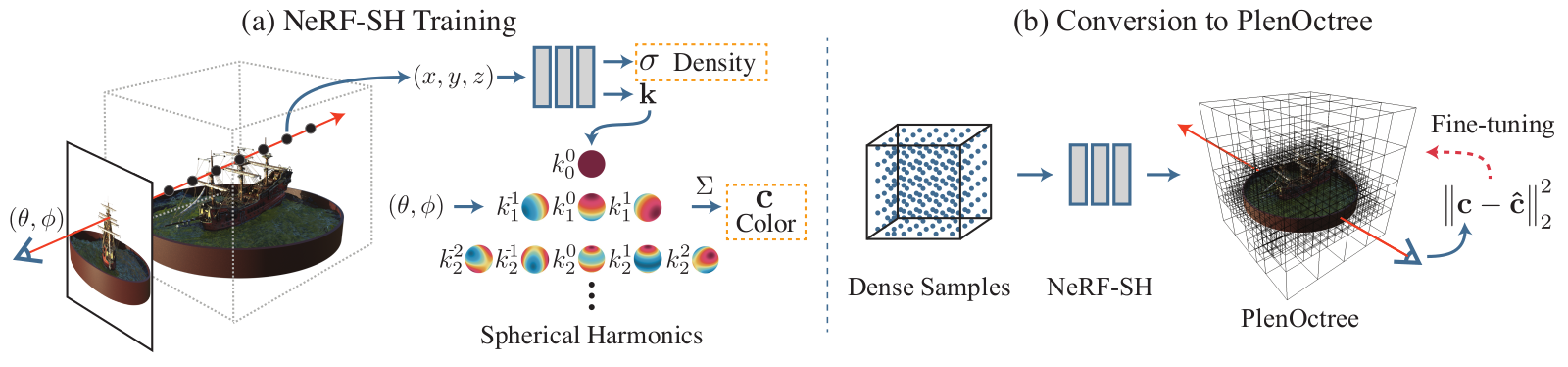

PlenOctrees

Paper: PlenOctrees for Real-time Rendering of Neural Radiance Fields

Code: NeRF-SH training and conversion & Volume Rendering

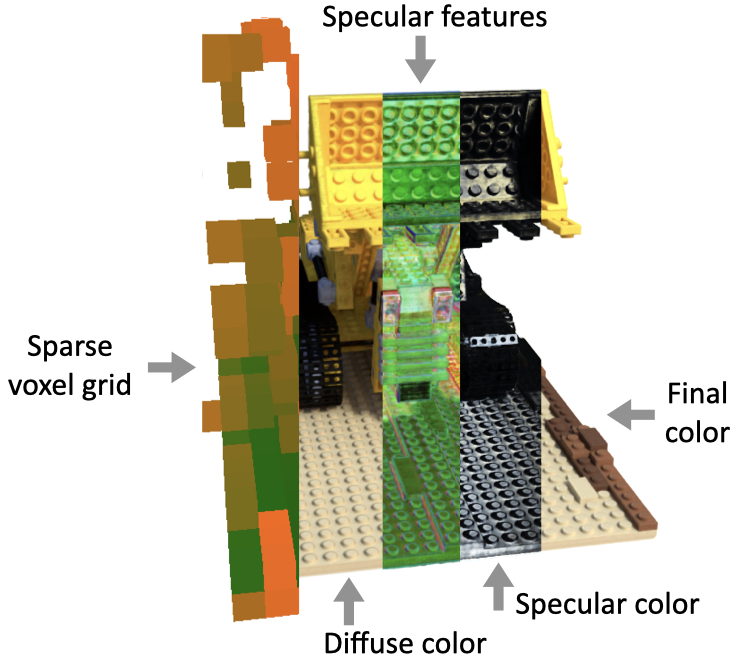

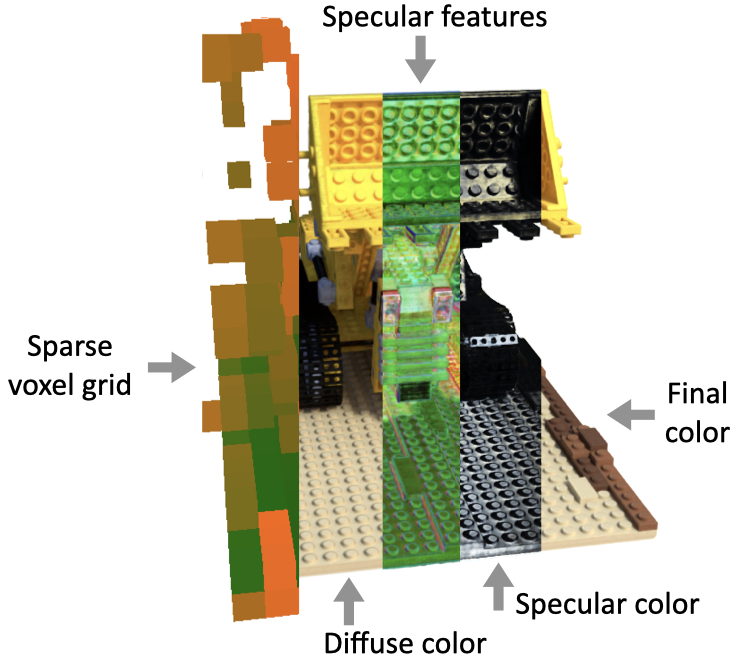

Sparse Neural Radiance Grids (SNeRG)

Paper: Baking Neural Radiance Fields for Real-Time View Synthesis

Code: google-research/snerg

Faster Neural Radiance Fields Inference

- NeRF Inference: Probabilistic Approach

- Faster Inference: Efficient Ray-Tracing + Image Decomposition

| Method Render | time | Speedup |

| NeRF | 56185 ms | – |

| NeRF + ESS + ERT | 788 ms | 71 |

| KiloNeRF | 22 ms | 2548 |

- Sparse Voxel Grid and Octrees: Spatial Sparsity

- Neural Sparse Voxel Fields proposed learn a sparse voxel grid in a progressive manner that increases the resolution of the voxel grid at a time to not just such represent explicit geomety but also to learn the implicit features per non-empty voxel.

- PlenOctree also uses the octree structure to speed up the geometry queries and store the view-dependent color representation on the leaves.

- KiloNeRF proposes to decompose this large deep NeRF into a set of small shallow NeRFs that capture only a small portion of the space.

- Baking Neural Radiance Fields (SNeRG) proposes to decompose an image into the diffuse color and specularity so that the inference network handles a very simple task.

Point-NeRF

Paper: Point-NeRF: Point-based Neural Radiance Fields

Code: Xharlie/pointnerf

Point-NeRF uses neural 3D point clouds, with associated neural features, to model a radiance field.

Point-NeRF uses neural 3D point clouds, with associated neural features, to model a radiance field.

SqueezeNeRF

Paper: SqueezeNeRF: Further factorized FastNeRF for memory-efficient inference

Nerfies: Deformable Neural Radiance Fields

Paper: Nerfies: Deformable Neural Radiance Fields

Code: google/nerfies

Light Field Networks

Paper: Light Field Networks: Neural Scene Representations with Single-Evaluation Rendering

Code: Light Field Networks

NeRFPlayer

Paper: NeRFPlayer: A Streamable Dynamic Scene Representation with Decomposed Neural Radiance Fields

https://camo.githubusercontent.com/2ecb6fc0e37869b741b869365c483eaf4b19ad42c11acc152319db9922e2a955/68747470733a2f2f696d672e796f75747562652e636f6d2f76692f666c5671534c5a57424d492f302e6a7067

3D Avatar

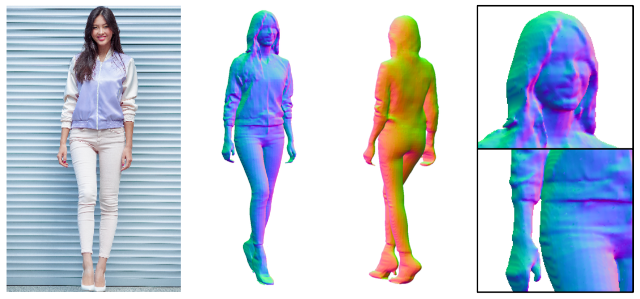

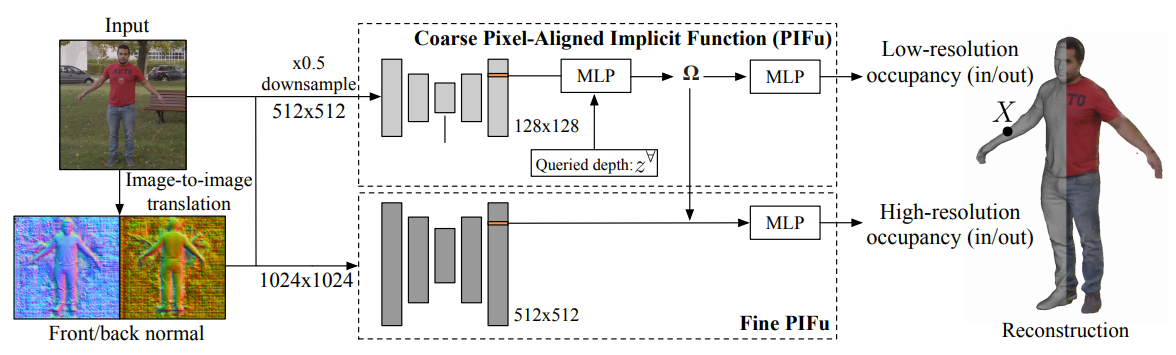

PIFuHD: Multi-Level Pixel-Aligned Implicit Function for High-Resolution 3D Human Digitization

Blog: PIFuHD: Multi-Level Pixel-Aligned Implicit Function for High-Resolution 3D Human Digitization

Paper: PIFuHD: Multi-Level Pixel-Aligned Implicit Function for High-Resolution 3D Human Digitization

Code: facebookresearch/pifuhd

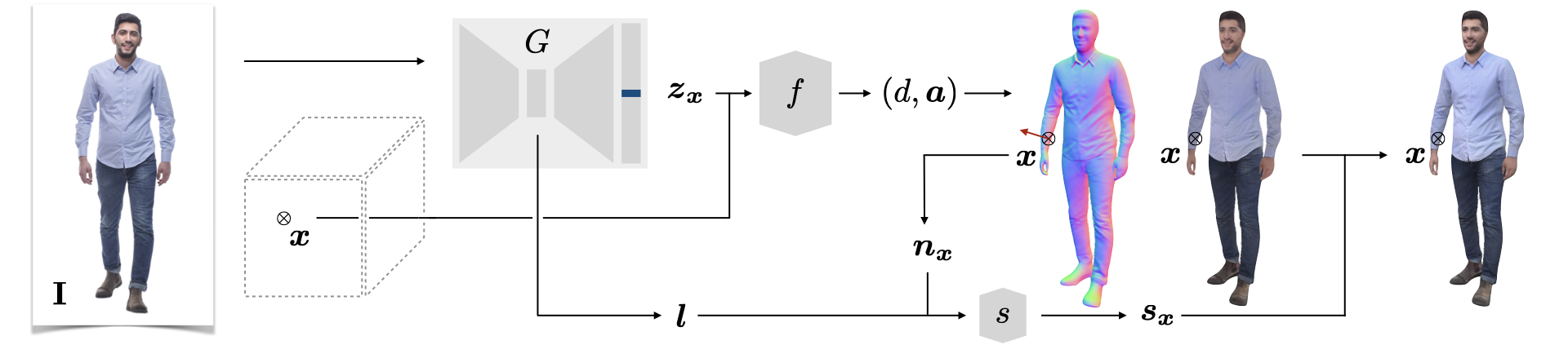

gDNA: Towards Generative Detailed Neural Avatars

Blog: PIFuHD: Multi-Level Pixel-Aligned Implicit Function for High-Resolution 3D Human Digitization

Paper: gDNA: Towards Generative Detailed Neural Avatars

To generate diverse 3D humans, we build an implicit multi-subject articulated model. We model clothed human shapes and detailed surface normals in a pose-independent canonical space via a neural implicit surface representation, conditioned on latent codes.

To generate diverse 3D humans, we build an implicit multi-subject articulated model. We model clothed human shapes and detailed surface normals in a pose-independent canonical space via a neural implicit surface representation, conditioned on latent codes.

Phorhum

Paper: Photorealistic Monocular 3D Reconstruction of Humans Wearing Clothing

Face Swap

faceswap-GAN

Code: https://github.com/shaoanlu/faceswap-GAN

DeepFake

Paper: DeepFaceLab: Integrated, flexible and extensible face-swapping framework

Github: iperov/DeepFaceLab

DeepFake Detection Challenge

ObamaNet

Paper: ObamaNet: Photo-realistic lip-sync from text

Code: acvictor/Obama-Lip-Sync

Talking Face

Paper: Talking Face Generation by Adversarially Disentangled Audio-Visual Representation

Code: Hangz-nju-cuhk/Talking-Face-Generation-DAVS

Neural Talking Head

Blog: Creating Personalized Photo-Realistic Talking Head Models

Paper: Few-Shot Adversarial Learning of Realistic Neural Talking Head Models

Code: vincent-thevenin/Realistic-Neural-Talking-Head-Models

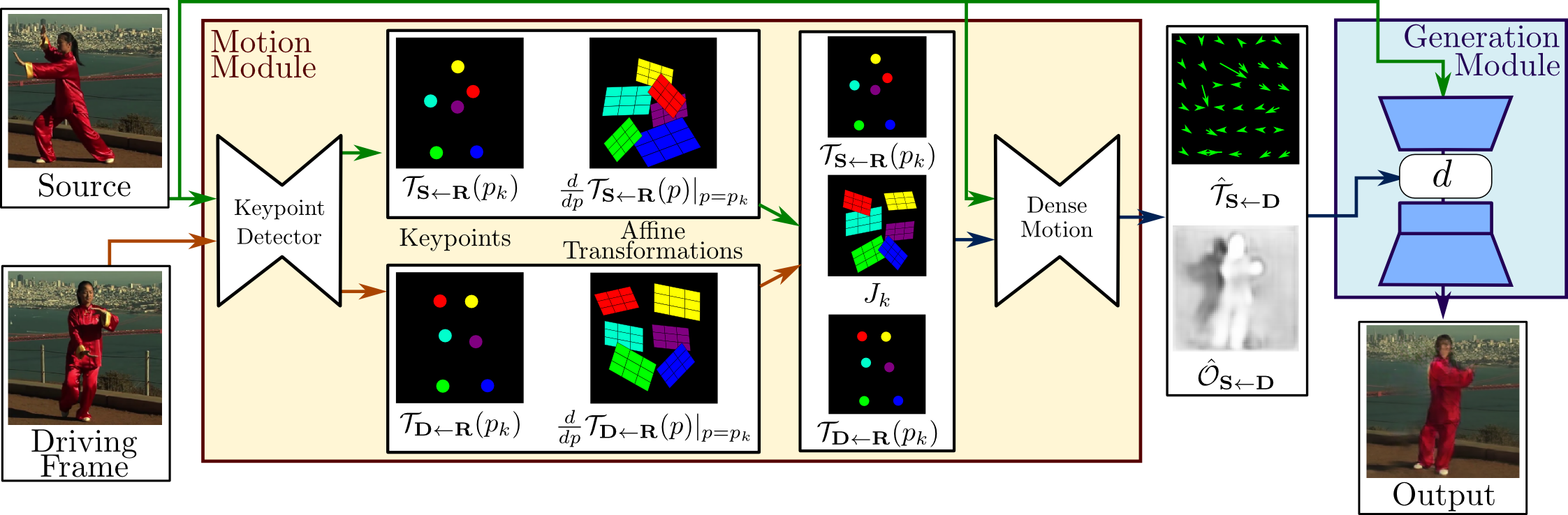

First Order Model

Blog: First Order Motion Model for Image Animation

Paper: First Order Motion Model for Image Animation

Code: AliaksandrSiarohin/first-order-model

This site was last updated June 29, 2024.